pgpool-II 3.5 features: Difference between revisions

No edit summary |

|||

| (5 intermediate revisions by 2 users not shown) | |||

| Line 36: | Line 36: | ||

slowness of query execution through pgpool-II. | slowness of query execution through pgpool-II. | ||

[[File:why-extended-protocol-is-so-slow.png| | [[File:why-extended-protocol-is-so-slow.png|left|thumb|400px|Diagram to explain why extended protocol is slow]] | ||

=== Overcoming the slowness === | === Overcoming the slowness === | ||

[[File:extended-protocol-graph-3.5.png| | [[File:extended-protocol-graph-3.5.png|right|thumb|400px|TPS pgpool-II 3.5 vs. 3.4 in extended protocol]] | ||

Pgpool-II 3.5 overcomes the weakness in some cases. In streaming | Pgpool-II 3.5 overcomes the weakness in some cases. In streaming | ||

| Line 47: | Line 47: | ||

easy project because pgpool-II's architecure prerequisite that backend | easy project because pgpool-II's architecure prerequisite that backend | ||

responds each time when extended protocol messages was sent. The hard | responds each time when extended protocol messages was sent. The hard | ||

work is rewarded by significant performance improvement. Bench marking | work is rewarded by significant performance improvement. We conducted a bench marking test on two AWS EC2 instances (m4.large) running on Cent OS 6 with PostgreSQL 9.4 being installed. | ||

pgpool-II and pgbench run on a separate AWS EC2 instance (m4.large). | |||

Bench marking | |||

shows that pgpool-II 3.5 is 20% to 250% faster than 3.4. | shows that pgpool-II 3.5 is 20% to 250% faster than 3.4. | ||

| Line 90: | Line 92: | ||

pgbench repeatedly connects and disconnects to PostgreSQL, which results in poor performance. | pgbench repeatedly connects and disconnects to PostgreSQL, which results in poor performance. | ||

This shows why we need connection pooling. | This shows why we need connection pooling. | ||

== Watchdog enhancements == | |||

The watchdog is a sub process of pgpool-II, which provides high availability and automatic failover functionality between pgpool-II nodes. The watchdog process provides this functionality by monitoring two or more pgpool-II nodes and by promoting the standby node to master/active in case of a failure. The watchdog feature has been part of pgpool-II since version 3.2, and the pgpool-II 3.5 release has added some major enhancements to watchdog to make it more robust and resilient in handling failure scenarios. | |||

The enhancements also overcome most of the shortcoming and the problems of the existing watchdog features, the following shortcomings of the existing watchdog are addressed in pgpool-II 3.5: | |||

''• The watchdog now considers the quorum in the cluster. And only performs node escalation (acquire delegate-IP) when the quorum in the cluster is complete, This is how watchdog ensures that even in case of network partitioning or momentary split-brain the watchdog cluster keep working smoothly and the IP conflict situation should not happen, because of multiple not trying to acquire same virtual IP. | |||

• All pgpool-II nodes participating in the watchdog cluster make sure that the pgpool-II configuration of all nodes in the cluster is consistent so the user should not get any unwelcome surprise because of any configuration mistake at the time of node escalations. | |||

• Watchdog nodes now have configurable watchdog node priority (wd_priority), This will give the users more control on which node should become a leader node when the cluster elects the master node. | |||

• The third watchdog node health-checking (lifechecking), mode "external" along with already existing "query" and "heartbeat" is added to the watchdog. This new mode disables the internal lifecheck of pgpool-II watchdog and relies on external system to inform about node health status. Using this mode any external/3rd party node health checking system can be integrated with pgpool-II watchdog for lifechecking. | |||

• The new watchdog cluster always keeps exchanging the periodic beacon messages and keep looking for problems like split-brain syndrome and automatically recover from it. | |||

• Now user can provide scripts to be executed at time of escalation and de-escalation to master/leader nodes, This allows the pgpool-II watchdog to work on the different flavors of network (cloud platforms) where acquiring and releasing of virtual/elastic IP requires proprietary scripts/commands.'' | |||

==PCP system overhauling== | |||

The whole PCP system, both server and client side get a major overhaul in pgpool-II 3.5. Apart from the code refactoring that significantly improves the manageability and maintanibility of the of PCP library and front-end clients, the overhauled PCP adds the following new features. | |||

• The PCP communication protocol is enhanced and now more detailed PostgreSQL style ERROR and DEBUG messages can be delivered from the PCP server to the front-ends, This change not only makes the message style of the PCP system more in line with the rest of pgpool-II but also helps in making the user experience of PCP more enhanced by providing the user with detailed error and debug information. | |||

• Traditionally the PCP server was single process and was able to only process one command at a time, After the overhauling pgpool-II PCP server can now handle multiple simultaneous PCP commands. The exception to this is pcp_recovery_node, Only one recovery command is allowed to run at one time. | |||

• PCP process now considers the shutdown modes. Previously pcp_child didn't not care about if the mode "smart or fast" was provided in the shutdown request issued to it by pgpool-II process and used to always kill it-self instantly. The PCP in pgpool-II 3.5 considers the shutdown mode and when the smart shutdown is requested, it makes sure that the current executing PCP command is finished before proceeding with the shutdown. | |||

• PCP front-end commands now support long command line options. In previous versions, each PCP command only accepts the fixed number of command line options and in a fixed order. Overhauling of the PCP system in pgpool-II 3.5 enables all the PCP utilities to accept long command line options in any order and user can also omit the arguments for which they want to use the default values. | |||

• Old command format | |||

pcp_attach_node _timeout_ _host_ _port_ _userid_ passed nodded | |||

• New Command format | |||

pcp_attach_node [options...] node_id | |||

• Your PCP password is now safe with pgpool-II 3.5. PCP utilities used to accept PCP password as a command line argument, which was a big security flaw. That password in the command line could easily be captured using the ps command. Now in the overhauled version of the PCP system all the utilities get the password from password prompt or can also read it from the .password file. PCP client side uses the PCPPASSFILE environment variable which can be used to provide the patch of the .password file. | |||

== Importing PostgreSQL 9.5 parser == | == Importing PostgreSQL 9.5 parser == | ||

| Line 119: | Line 164: | ||

checking and the database used for streaming replication delay | checking and the database used for streaming replication delay | ||

respectively. | respectively. | ||

''show pool_nodes'' which briefly describes the status of each backend now shows how many SELECTs are issued to them. | |||

So you can quickly recognize the effect of load balancing for example. | |||

test=# show pool_nodes; | |||

node_id | hostname | port | status | lb_weight | role | select_cnt | |||

---------+----------+-------+--------+-----------+---------+------------ | |||

0 | /tmp | 11002 | 2 | 0.500000 | primary | 338230 | |||

1 | /tmp | 11003 | 2 | 0.500000 | standby | 163939 | |||

(2 rows) | |||

Latest revision as of 15:01, 17 December 2015

Introducing pgpool-II 3.5 new features

pgpool-II 3.5 is enhanced in many areas as previous major releases of pgpool-II. However this time pgpool-II enhances the performance in some use cases and this kind of changes are pretty rare in the pgpool-II history. Let's discuss performance of extended query protocol first.

Improved performance in extended query protocol

Extended query protocol is used in many applications written in various languages and APIs including Java and JDBC. One of the benefit of it is, it allows to reuse query plans to save planning time. The other benefit is it could make queries more secure. The extended query protocol allows to use parameters for query. Without this, applications need to generate query string by themselves and may introduce famous SQL injection attack.

Until pgpool-II 3.4, unfortunately it is not good at handling the extended query protocol. It is very slow when the extended query protocol is executed through pgpool-II.

Why extended protocol is slow anyway?

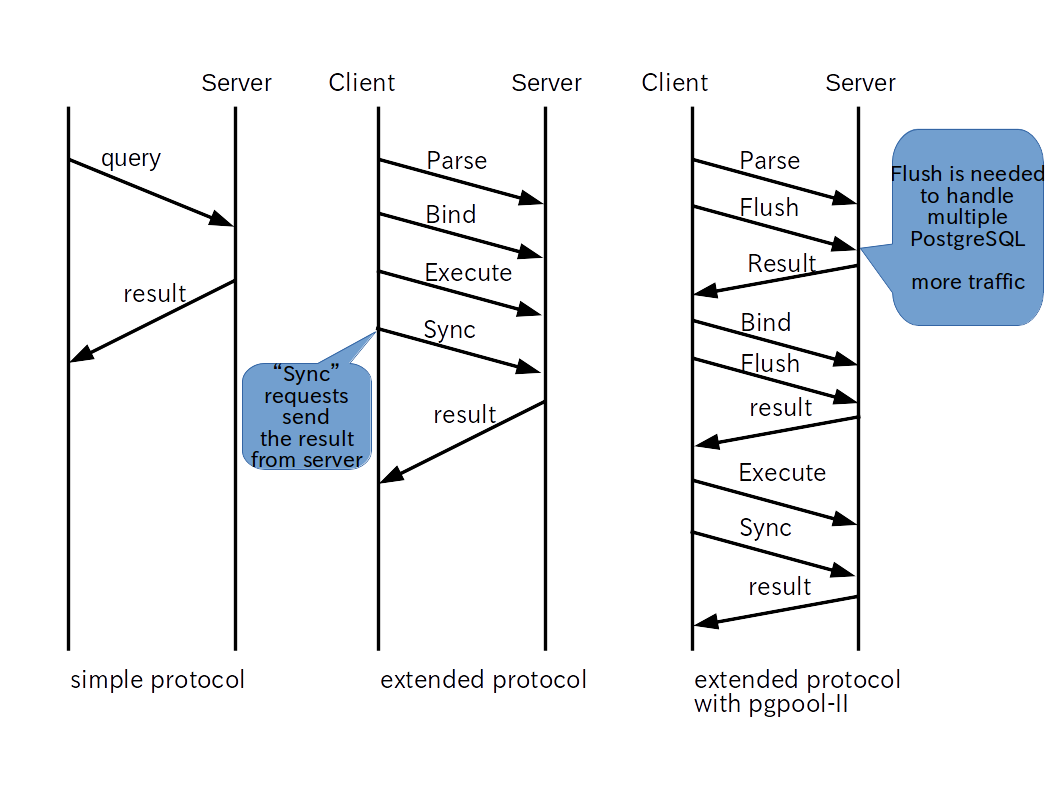

In the extended query protocol, queries are divided into several phases: Parse, Bind, Execute and Sync (there are more message types in the protocol. See PostgreSQL docs for more details). Parse message requests to create query plans. Bind message requests to attach parameters to the plan. Execute message requests to execute the query. Note that in each phase PostgreSQL does not respond the each result in the each phases. Rather, all of the responses are replied back to clients (including pgpool-II) at once.

However, pgpool-II needs to synchronize backend responses for internal processing. This is done by issuing additional Flush message in the each protocol phase, which requests response from backend. Problem is, this generates significant amount of network traffic and cause slowness of query execution through pgpool-II.

Overcoming the slowness

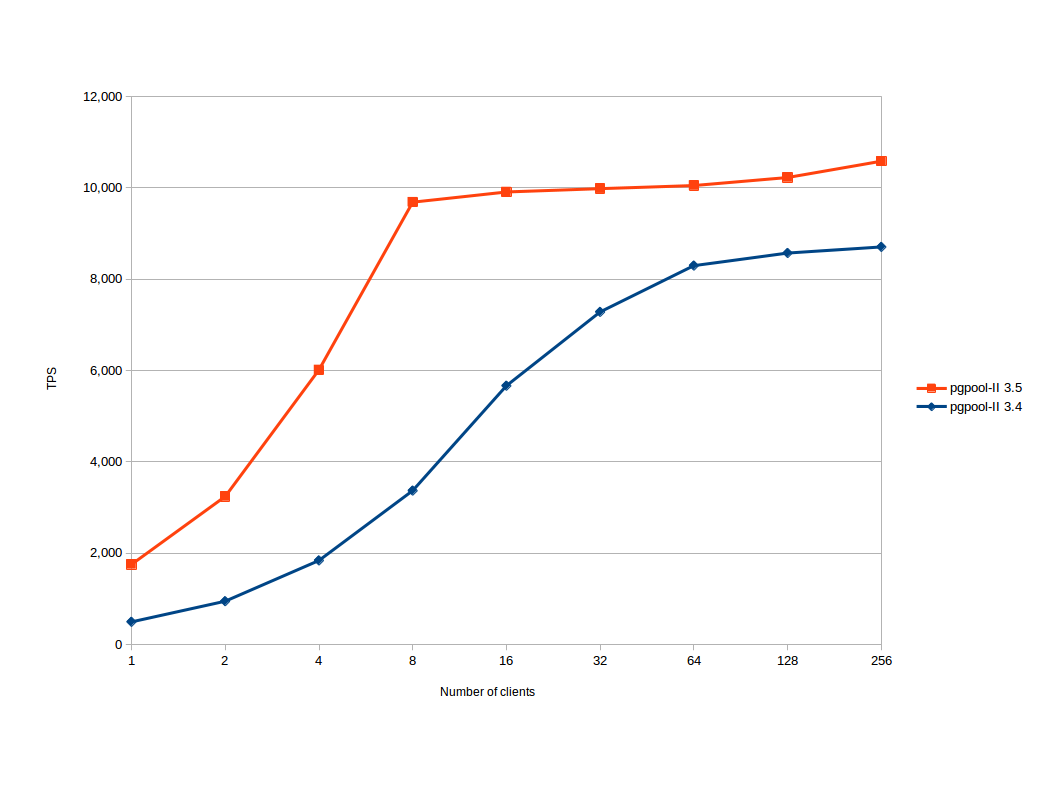

Pgpool-II 3.5 overcomes the weakness in some cases. In streaming replication mode, because pgpool-II does not need to sync each backend, pgpool-II 3.5 does not issue flush messages. This was not an easy project because pgpool-II's architecure prerequisite that backend responds each time when extended protocol messages was sent. The hard work is rewarded by significant performance improvement. We conducted a bench marking test on two AWS EC2 instances (m4.large) running on Cent OS 6 with PostgreSQL 9.4 being installed. pgpool-II and pgbench run on a separate AWS EC2 instance (m4.large). Bench marking shows that pgpool-II 3.5 is 20% to 250% faster than 3.4.

The performance of other mode (native replication mode and raw mode) remains same.

Overcoming the thundering herd problem

Pgpool-II has a problem called the thundering herd problem.

What is the thundering herd problem anyway?

The Wikipedia says:

The thundering herd problem occurs when a large number of processes

waiting for an event are awoken when that event occurs, but only one process is able to proceed at a time. After the processes wake up, they all demand the resource and a decision must be made as to which process can continue. After the decision is made, the remaining processes are put back to sleep, only to all wake up again to request

access to the resource.

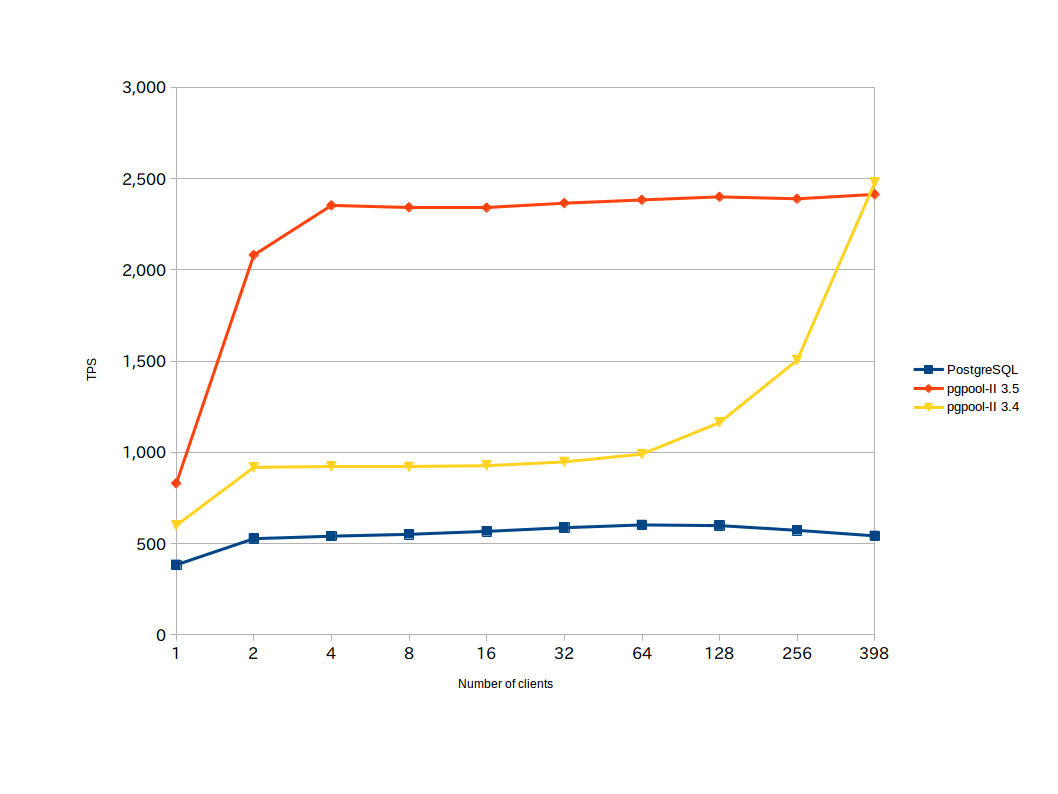

Pgpool-II forks off child process and wait for connection requests from clients. When a request arrives only one of them can accept the request. Other process just are awoken and sleep again. Pgpool-II 3.5 allow only one process to accept the request by using semaphore and never let other process awoken except the process which had been waiting for the semaphore. This eliminates the thundering herd problem. To enable the functionality, you must turn on the new parameter serialize_accept in pgpool.conf. Also child_life_time must be disabled (set to 0), which is due to an limitation of current implementation. Note that the method is most effective when num_init_children is large and the number of concurrent clients is relatively small. In our test case, pgpool-II 3.5 is 40% to 150% faster than 3.4.

The graph left shows how pgpool-II 3.5 putperforms 3.4. We set everything (pgpool-II, PostgreSQL and pgbench) on a laptop which has 16GB mem and 512 GB SSD, running Ubuntu 14.04. We start pgbench with -C option which repeats connect and disconnect to pgpool-II to simulate the thundering herd problem. Note that the blue line shows raw PostgreSQL TPS for comparison. pgbench repeatedly connects and disconnects to PostgreSQL, which results in poor performance. This shows why we need connection pooling.

Watchdog enhancements

The watchdog is a sub process of pgpool-II, which provides high availability and automatic failover functionality between pgpool-II nodes. The watchdog process provides this functionality by monitoring two or more pgpool-II nodes and by promoting the standby node to master/active in case of a failure. The watchdog feature has been part of pgpool-II since version 3.2, and the pgpool-II 3.5 release has added some major enhancements to watchdog to make it more robust and resilient in handling failure scenarios.

The enhancements also overcome most of the shortcoming and the problems of the existing watchdog features, the following shortcomings of the existing watchdog are addressed in pgpool-II 3.5:

• The watchdog now considers the quorum in the cluster. And only performs node escalation (acquire delegate-IP) when the quorum in the cluster is complete, This is how watchdog ensures that even in case of network partitioning or momentary split-brain the watchdog cluster keep working smoothly and the IP conflict situation should not happen, because of multiple not trying to acquire same virtual IP.

• All pgpool-II nodes participating in the watchdog cluster make sure that the pgpool-II configuration of all nodes in the cluster is consistent so the user should not get any unwelcome surprise because of any configuration mistake at the time of node escalations.

• Watchdog nodes now have configurable watchdog node priority (wd_priority), This will give the users more control on which node should become a leader node when the cluster elects the master node.

• The third watchdog node health-checking (lifechecking), mode "external" along with already existing "query" and "heartbeat" is added to the watchdog. This new mode disables the internal lifecheck of pgpool-II watchdog and relies on external system to inform about node health status. Using this mode any external/3rd party node health checking system can be integrated with pgpool-II watchdog for lifechecking.

• The new watchdog cluster always keeps exchanging the periodic beacon messages and keep looking for problems like split-brain syndrome and automatically recover from it.

• Now user can provide scripts to be executed at time of escalation and de-escalation to master/leader nodes, This allows the pgpool-II watchdog to work on the different flavors of network (cloud platforms) where acquiring and releasing of virtual/elastic IP requires proprietary scripts/commands.

PCP system overhauling

The whole PCP system, both server and client side get a major overhaul in pgpool-II 3.5. Apart from the code refactoring that significantly improves the manageability and maintanibility of the of PCP library and front-end clients, the overhauled PCP adds the following new features.

• The PCP communication protocol is enhanced and now more detailed PostgreSQL style ERROR and DEBUG messages can be delivered from the PCP server to the front-ends, This change not only makes the message style of the PCP system more in line with the rest of pgpool-II but also helps in making the user experience of PCP more enhanced by providing the user with detailed error and debug information.

• Traditionally the PCP server was single process and was able to only process one command at a time, After the overhauling pgpool-II PCP server can now handle multiple simultaneous PCP commands. The exception to this is pcp_recovery_node, Only one recovery command is allowed to run at one time.

• PCP process now considers the shutdown modes. Previously pcp_child didn't not care about if the mode "smart or fast" was provided in the shutdown request issued to it by pgpool-II process and used to always kill it-self instantly. The PCP in pgpool-II 3.5 considers the shutdown mode and when the smart shutdown is requested, it makes sure that the current executing PCP command is finished before proceeding with the shutdown.

• PCP front-end commands now support long command line options. In previous versions, each PCP command only accepts the fixed number of command line options and in a fixed order. Overhauling of the PCP system in pgpool-II 3.5 enables all the PCP utilities to accept long command line options in any order and user can also omit the arguments for which they want to use the default values.

• Old command format pcp_attach_node _timeout_ _host_ _port_ _userid_ passed nodded • New Command format pcp_attach_node [options...] node_id

• Your PCP password is now safe with pgpool-II 3.5. PCP utilities used to accept PCP password as a command line argument, which was a big security flaw. That password in the command line could easily be captured using the ps command. Now in the overhauled version of the PCP system all the utilities get the password from password prompt or can also read it from the .password file. PCP client side uses the PCPPASSFILE environment variable which can be used to provide the patch of the .password file.

Importing PostgreSQL 9.5 parser

Pgpool-II has a built-in SQL parser which is used for mainly query dispatching and load balancing. The parser is being imported from PostgreSQL. Pgpool-II 3.4's SQL parser was imported from PostgreSQL 9.4 and does not understand new syntax employed in PostgreSQL 9.5. Those queries the parser does not know are sent to the primary node and are never load balanced, which makes pgpool-II less effective when it needs to handle those queries.

Pgpool-II 3.5 imports the SQL parser from PostgreSQL 9.5 and it can understand new syntax introduced in PostgreSQL 9.5. Especially GROUPING SET, CUBE, ROLLUP and TABLESAMPLE, now can be be load balanced and can be used in query cache. Of course pgpool-II 3.5 understands PostgreSQL syntax used in 9.4 as well.

Also INSERT...ON CONFLICT and UPDATE tab SET (col1, col2, ...) = (SELECT ...) ... can now be properly handled in query rewriting in native replication mode.

Other new features

Some PassS systems including Heroku does not allow to use systems databases, i.e. postgres and template1. This prevent pgpool-II from using such that PassS. New parameters health_check_database and sr_check_database allow to specify the database used for health checking and the database used for streaming replication delay respectively.

show pool_nodes which briefly describes the status of each backend now shows how many SELECTs are issued to them. So you can quickly recognize the effect of load balancing for example.

test=# show pool_nodes; node_id | hostname | port | status | lb_weight | role | select_cnt ---------+----------+-------+--------+-----------+---------+------------ 0 | /tmp | 11002 | 2 | 0.500000 | primary | 338230 1 | /tmp | 11003 | 2 | 0.500000 | standby | 163939 (2 rows)